Dataset Example & Action Space

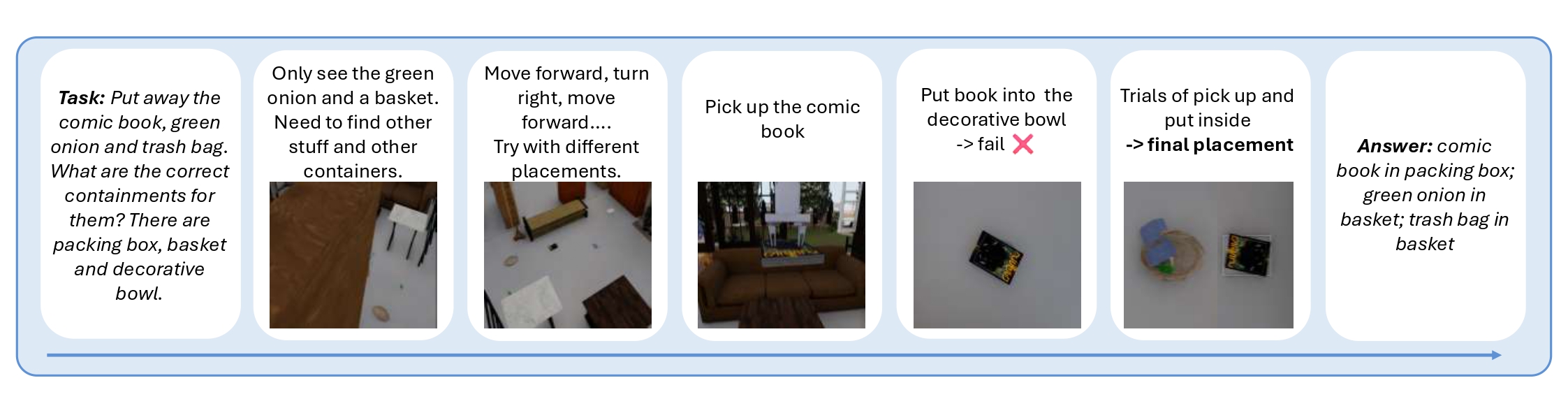

A worked task instance ("put away the comic book, green onion, trash bag") alongside ESI-Bench's unified action vocabulary spanning locomotion, perception, and manipulation.

Spatial intelligence unfolds through a perception–action loop: agents act to acquire observations, and reason about how observations vary as a function of action. Rather than passively processing what is seen, they actively uncover what is unseen — occlusion, dynamics, containment, and functionality — beyond the reach of passive sensing.
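The loop described above can be illustrated with a minimal toy sketch. It is not ESI-Bench's API or its OmniGibson environment: `ToyScene`, `passive_answer`, `active_answer`, and the ring of discrete viewpoints are all hypothetical names and assumptions introduced only for illustration. The sketch contrasts answering from one fixed view with acting to obtain a view that reveals an occluded object.

```python
from dataclasses import dataclass


# Hypothetical toy world, NOT the benchmark's interface: an object sits behind
# an occluder and is visible only from some viewpoints on a ring around it.
@dataclass(frozen=True)
class ToyScene:
    num_viewpoints: int         # discrete camera poses around the scene
    revealing_views: frozenset  # poses from which the hidden object is visible

    def observe(self, pose: int) -> bool:
        """Return True if the hidden object is visible from this pose."""
        return pose in self.revealing_views


def passive_answer(scene: ToyScene, pose: int = 0) -> bool:
    """Answer 'is an object hidden here?' from a single fixed view."""
    return scene.observe(pose)


def active_answer(scene: ToyScene, start: int = 0, budget: int = 8):
    """Step around the ring until the object is seen or the budget is spent.

    Returns (answer, number_of_moves_taken).
    """
    pose, steps = start, 0
    while True:
        if scene.observe(pose):      # perception
            return True, steps
        if steps == budget:          # out of moves: commit to "not found"
            return False, steps
        pose = (pose + 1) % scene.num_viewpoints  # locomotion to the next view
        steps += 1


scene = ToyScene(num_viewpoints=8, revealing_views=frozenset({3}))
print(passive_answer(scene))  # False: the fixed view at pose 0 is occluded
print(active_answer(scene))   # (True, 3): three moves reach the revealing view
```

The fixed sweep used here is the simplest possible policy; choosing which view to visit next is where an actual agent's reasoning would enter.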

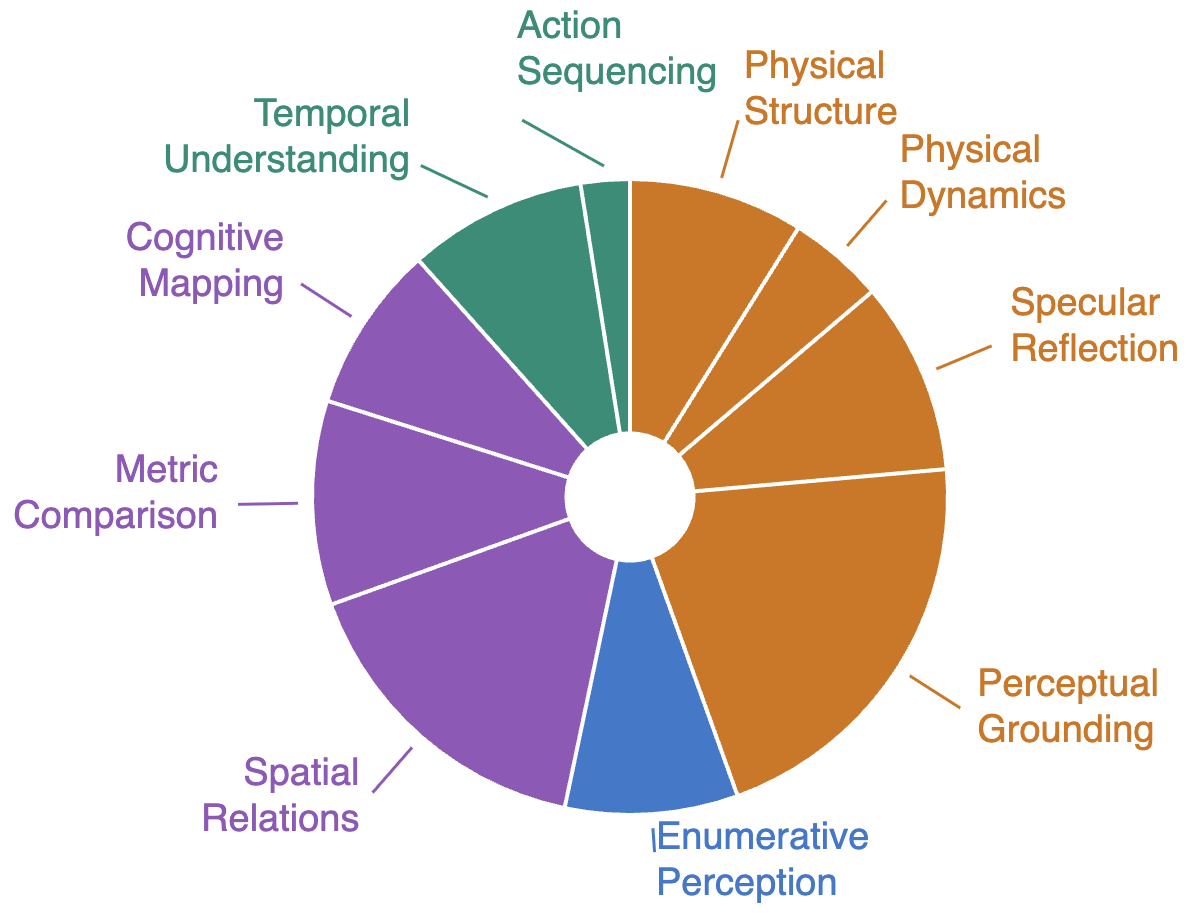

We take a step beyond prior formulations of spatial intelligence, which often emphasize passive perception or assume access to oracle observations, by recasting the observer as an actor. We introduce ESI-Bench, a comprehensive benchmark for embodied spatial intelligence built on OmniGibson and grounded in Spelke's core knowledge systems, spanning 10 task categories and 29 subcategories. Agents must decide which abilities to deploy (perception, locomotion, and manipulation) and how to act in order to answer questions that cannot be resolved from passive observation alone.

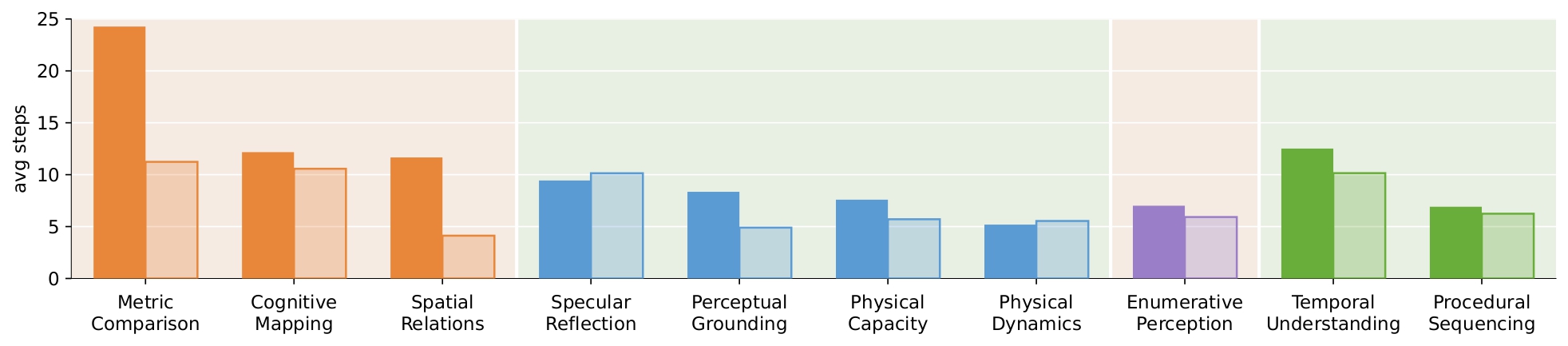

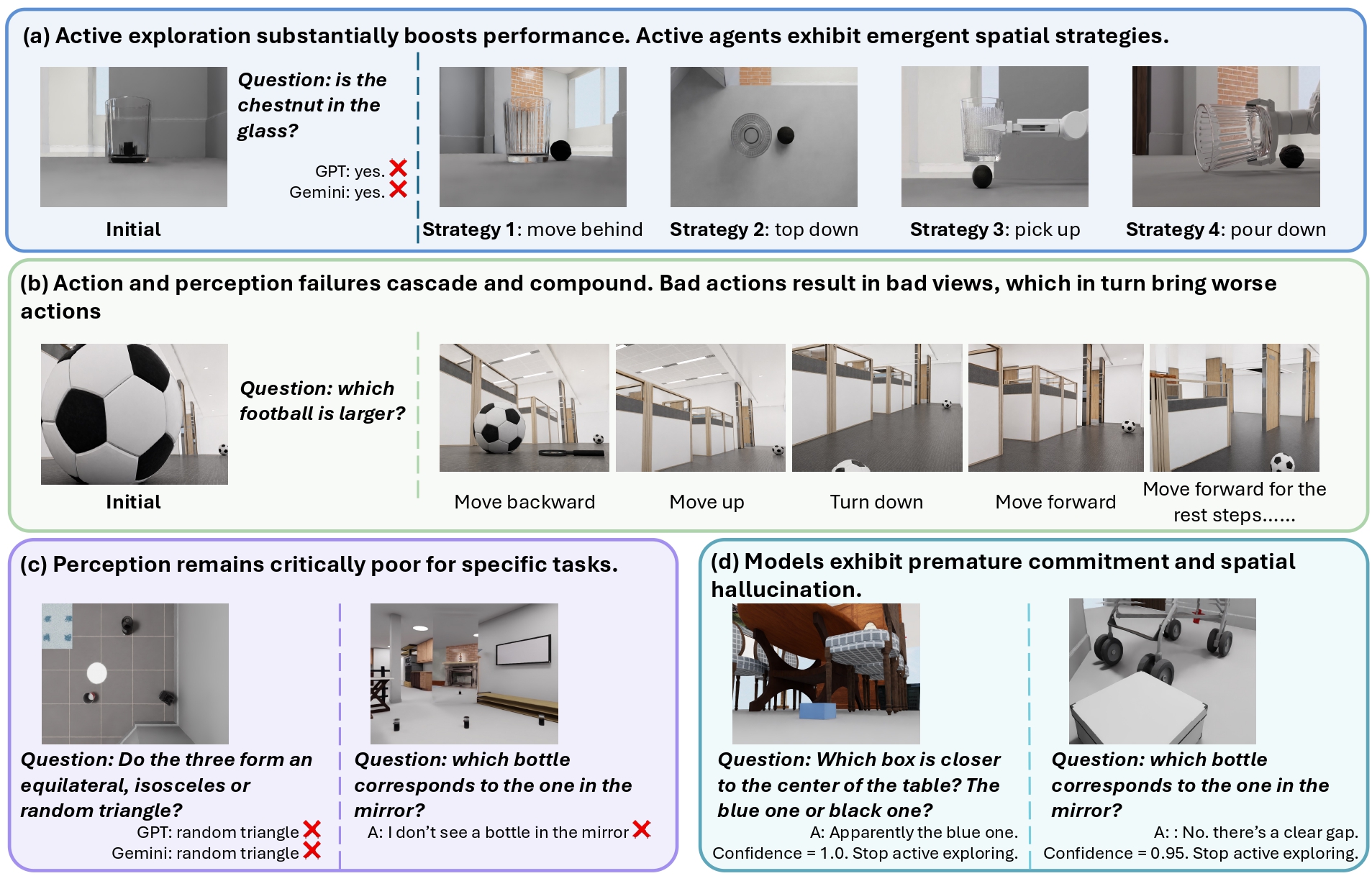

We conduct extensive experiments on state-of-the-art MLLMs and find that active exploration substantially outperforms its passive counterparts, with agents discovering emergent spatial strategies without explicit instruction, while passive multi-view adds noise rather than signal despite consuming far more images. Most failures stem not from weak perception but from action blindness, and the coupling of the two drives cascading failures in which bad actions produce bad views, which in turn produce worse actions. While explicit 3D grounding stabilizes reasoning on depth-sensitive tasks, imperfect reconstruction leaves models worse off than 2D baselines because it actively distorts spatial relations.

Human studies further reveal that, unlike humans, who seek falsifying viewpoints and revise beliefs under contradiction, models commit prematurely with high confidence regardless of evidence quality, exposing a metacognitive gap that neither better perception nor more embodied interaction alone can close.

Agents are evaluated not only on what they can perceive, but on whether they know how to act to perceive it — closing the loop between observation and action.

Agents must determine which observations are worth acquiring, prioritizing task-relevant information over redundant or uninformative inputs.

Agents must reason through incomplete or misleading observations to infer hidden spatial structures and physical constraints beyond what is directly observed.

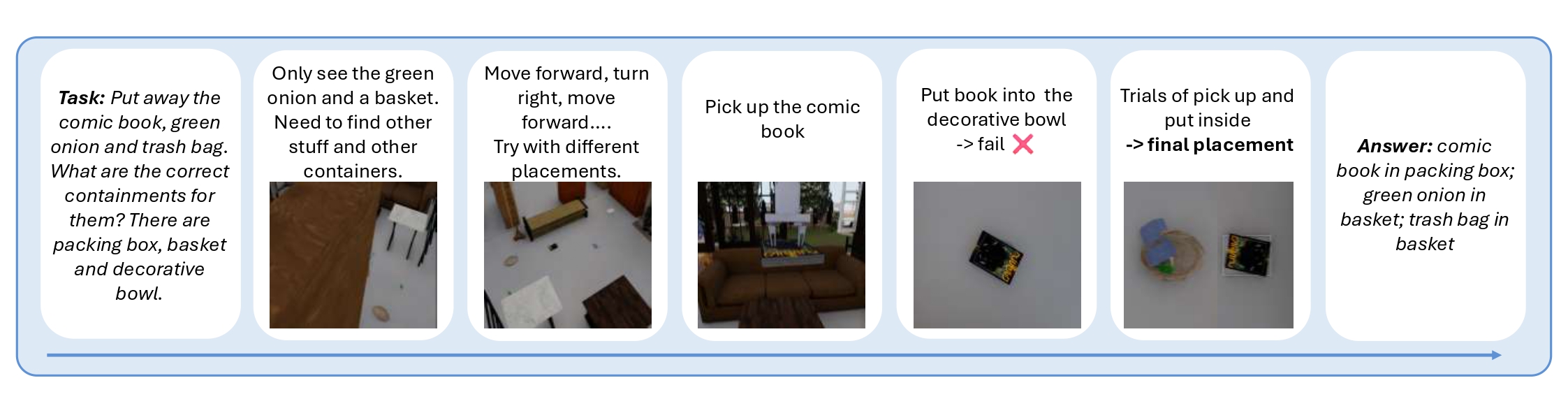

Average number of active steps to reach a correct answer for GPT-5 (solid) vs. Gemini 3.1 (outlined), grouped by task category.

Emergent strategies, cascading failures, hard perceptual ceilings, and premature commitment with spatial hallucination.

Distribution of task instances across the 10 categories, organized around Spelke's four core knowledge systems.

| Subcategory (GPT-5) | Passive Single | Passive Multi | Active | GT Passive |
|---|---|---|---|---|
| Partial Occlusion | 30.5 | 32.9 | 62.4 | 91.5 |
| View Hallucination | 11.7 | 20.2 | 60.1 | 87.8 |
| Material Transparency | 30.3 | 36.7 | 66.1 | 96.3 |
| Rigid Containment | 45.0 | 42.5 | 42.5 | 95.0 |
| Stacking & Stability | 34.8 | 37.1 | 62.9 | 86.5 |
| Counting w/ Occlusion | 3.3 | 3.3 | 13.3 | 56.7 |
| Structural Enclosure | 5.0 | 10.0 | 22.5 | 67.5 |
| Physical Contact | 40.0 | 41.7 | 64.2 | 90.0 |
| Dimensional Size | 42.5 | 44.9 | 67.7 | 80.3 |
| Unobserved Change | 40.5 | 41.2 | 51.4 | 77.0 |

Table 1. Accuracy (%) of GPT-5 across four paradigms on representative subcategories. Active exploration outperforms passive multi-view on nine of the ten subcategories shown (Rigid Containment is tied at 42.5); the large remaining gap to GT Passive isolates failures of action selection from failures of perception. Full results across all 29 subcategories, all four paradigms, and all evaluated systems (GPT-5, Gemini 3.1, VGGT+Gemini, GT 3D+Gemini, Human) appear in the paper.
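The two gaps discussed in the caption can be read directly off the table. The short script below is only a convenience sketch: the numbers are copied from Table 1, while the names `TABLE_1`, `gaps`, and `mean_gain` are illustrative and not part of any released code. For each subcategory it computes the gain of Active over Passive Multi and the remaining gap from Active to GT Passive.

```python
# Accuracy (%) for GPT-5, copied from Table 1.
# Tuple order: (passive single, passive multi, active, GT passive)
TABLE_1 = {
    "Partial Occlusion":     (30.5, 32.9, 62.4, 91.5),
    "View Hallucination":    (11.7, 20.2, 60.1, 87.8),
    "Material Transparency": (30.3, 36.7, 66.1, 96.3),
    "Rigid Containment":     (45.0, 42.5, 42.5, 95.0),
    "Stacking & Stability":  (34.8, 37.1, 62.9, 86.5),
    "Counting w/ Occlusion": (3.3, 3.3, 13.3, 56.7),
    "Structural Enclosure":  (5.0, 10.0, 22.5, 67.5),
    "Physical Contact":      (40.0, 41.7, 64.2, 90.0),
    "Dimensional Size":      (42.5, 44.9, 67.7, 80.3),
    "Unobserved Change":     (40.5, 41.2, 51.4, 77.0),
}


def gaps(row):
    """Return (active minus passive multi, GT passive minus active) in points."""
    _single, multi, active, gt = row
    return round(active - multi, 1), round(gt - active, 1)


def mean_gain(table):
    """Mean gain of Active over Passive Multi across the listed subcategories."""
    gains = [gaps(row)[0] for row in table.values()]
    return sum(gains) / len(gains)


for name, row in TABLE_1.items():
    gain, remaining = gaps(row)
    print(f"{name:22s} active-multi={gain:+6.1f}  gt-active={remaining:+6.1f}")
print(f"mean active-multi gain: {mean_gain(TABLE_1):.1f} points")
```

Because only ten representative subcategories are listed here, the mean describes this subset and not the full benchmark.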

Each category targets a distinct spatial faculty structurally inaccessible to passive sensing. Across all categories, the correct answer emerges not from any single image but from the agent's capacity to act selectively and reason over the result.

Plan placement of multiple objects across multiple containers.

Compare liquid-holding capacity across containers.

Decide whether a deformable container conforms to an object.

Predict object motion and stability on slopes.

Determine whether objects stack or balance given shape, mass, and geometry.

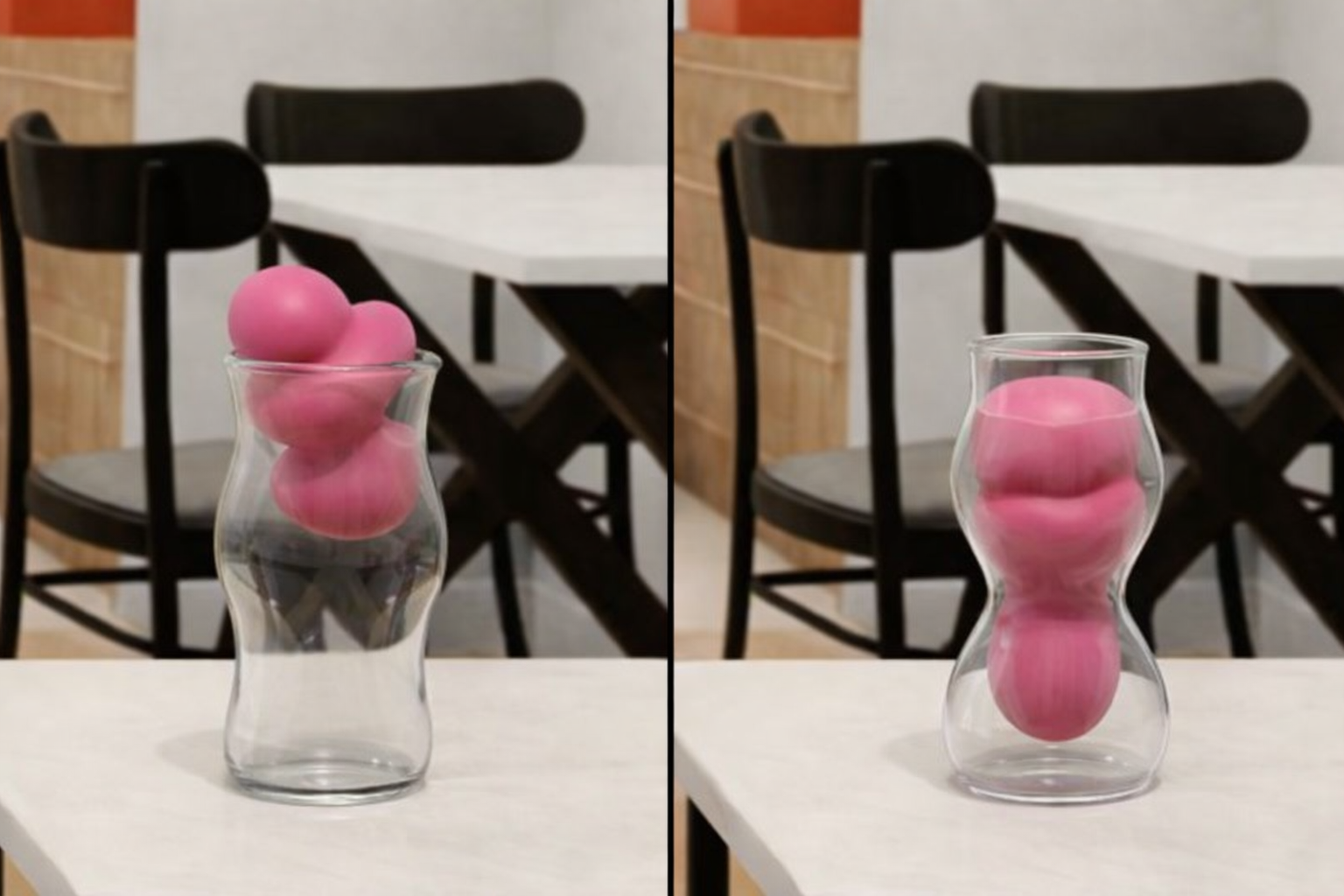

Distinguish real objects from mirror reflections.

Infer relations across mirror and real-world views.

Identify which objects appear in the mirror given the real scene.

Reason about objects hidden behind other scene elements.

Detect objects whose visibility changes critically with viewing angle.

Reason about objects seen through transparent surfaces.

Compare relative sizes of objects across vantage points.

Compare relative distances with respect to a reference object.

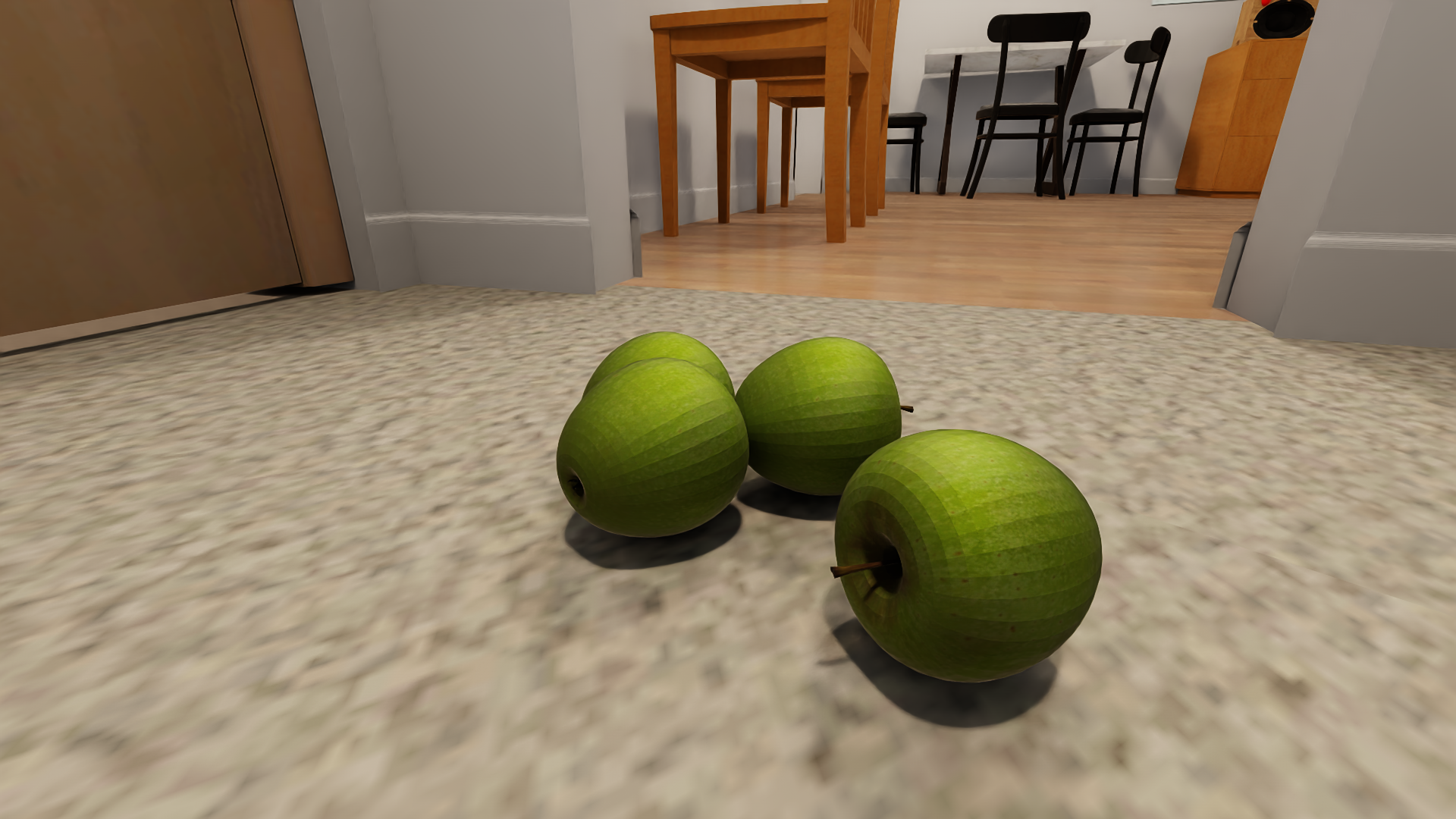

Count objects partially obscured by other scene elements.

Count objects separated across distinct spatial regions.

Count visually similar objects requiring fine-grained distinction.

Count groups that appear visually merged from a single view.

Count objects under challenging or non-uniform lighting.

Count objects hidden within enclosed or covered spaces.

Determine whether objects are arranged along a common axis.

Identify the shape formed by a set of objects (e.g., equilateral triangle).

Detect whether two or more objects are in direct contact.

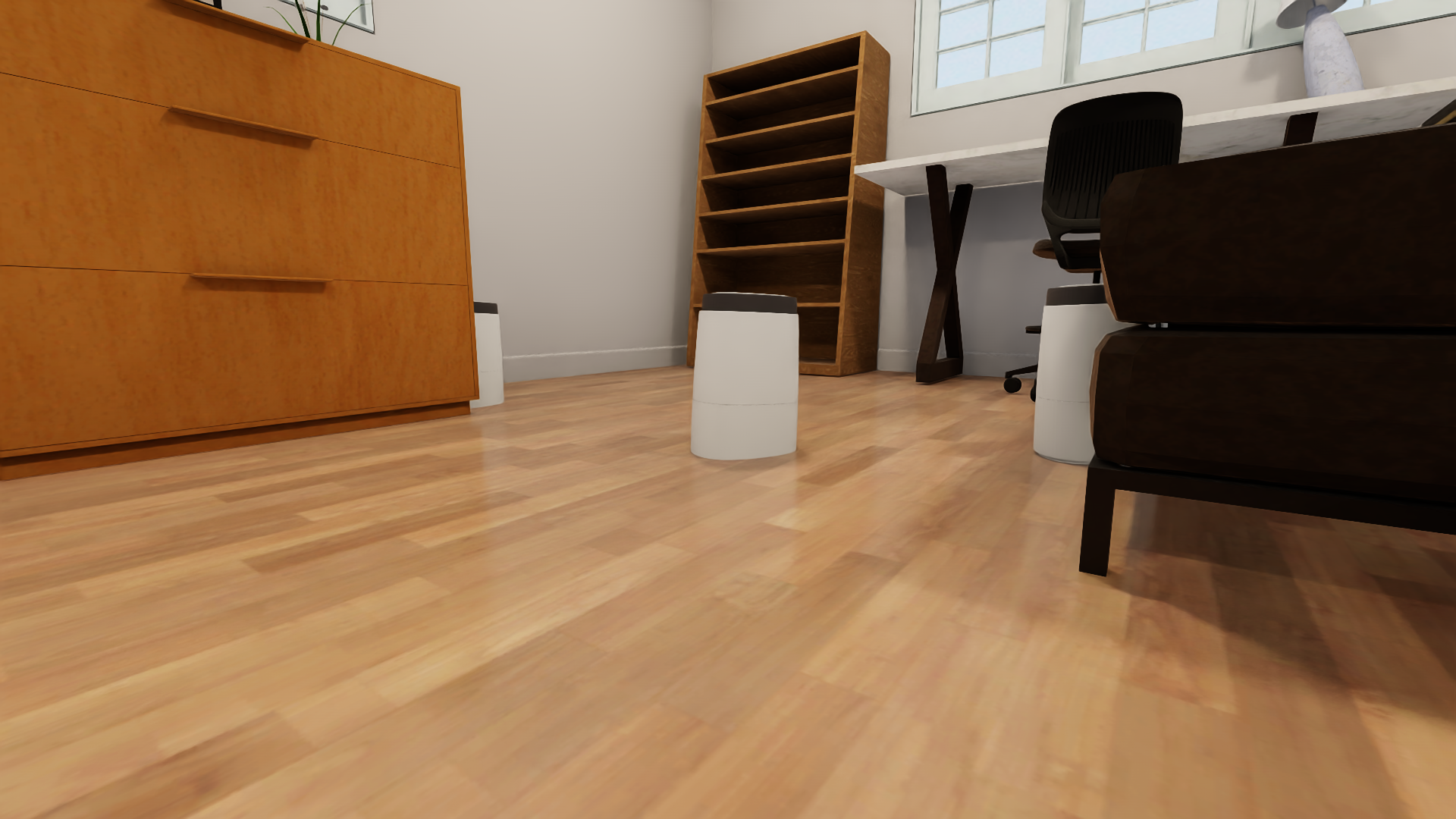

Determine whether two locations or regions are mutually reachable.

Identify navigable corridors or passageways between regions.

Identify and delineate distinct functional spatial regions.

Plan multi-step navigation toward a distant goal.

Infer scene changes that occurred during an unobserved interval.

Reason about scene dynamics induced by other agents.

Determine the correct procedural ordering of an action sequence.